As reported in the Forbes article “The Role Of Bias In Artificial Intelligence”, facial recognition systems are under scrutiny. Class imbalance is a leading issue in facial recognition software. A dataset called “Faces in the Wild,” considered the benchmark for testing facial recognition software, contained data that was 70% male and 80% white. Although the dataset may be good enough for use on lower-quality pictures, whether it truly represents faces “in the wild” is highly debatable.

Apart from algorithms and data, the researchers and engineers who develop a system are also responsible for its bias. According to VentureBeat, a Columbia University study found that “the more homogenous the [engineering] team is, the more likely it is that a given prediction error will appear.” A homogeneous team may lack empathy for people who face discrimination, which can lead it to unconsciously introduce bias into the algorithmic systems it builds.

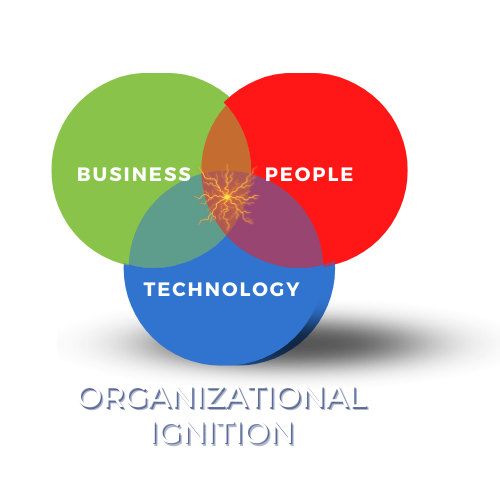

So, how can we eliminate the negative impact of bias in the use or development of our technology? Come to this IEEE session led by James McKim to gain insights into this persnickety challenge.

Date and Time

- Date: 19 Oct 2022

- Time: 05:00 PM to 07:00 PM